Greetings from Juergen

Hi all,

I'm back after a two-week break spent in San Diego celebrating birthdays with old college friends—one of those February traditions that's become essential over the years. This week's collection doesn't follow a single thread, but if there's a pattern, it's about what we lose when systems get too good at being smooth. BAFTA just announced it'll reward "human creativity" explicitly in film awards while banning AI avatars from acting categories—a move that sounds redundant until you realize we're at a moment where the baseline assumptions about creative work need to be stated out loud. Meanwhile, researchers ran an experiment linking text-to-image and image-to-text AI systems in an endless loop, and the result was what they called "visual elevator music"—pleasant, polished, utterly generic.

The design world is wrestling with similar questions. One lengthy piece explores how AI training on its own outputs creates recursive loops that sand down real trends into safe templates, while another asks whether we can still tell truth from synthetic imagery (and whether that even matters if the meaning holds up). I'm also drawn to a couple of stories that flip the script entirely—Maria Popova's meditation on how blue doesn't really exist in nature the way we think it does, and an exhibition at Rice University's Moody Center where artists use AI and datasets to expose the biases baked into computer vision. The Berlinale controversy over whether artists should "stay out of politics" rounds things out, raising questions about what neutrality actually means when your funding comes from governments and corporations.

What connects these pieces is a shared discomfort with smoothness—the sense that when everything works too well, we might be optimizing away the friction that produces genuine surprise.

Film & Video

Bafta to Reward 'Human Creativity' as Film and TV Grapples with AI

Film awards have long celebrated technical achievement alongside artistic vision, but BAFTA just added something new to the mix. The British Academy has introduced "human achievement" as a guiding principle for its annual awards, a move reported by the Financial Times that signals just how deeply AI has permeated film and TV production.

What strikes me is the careful balancing act here. BAFTA chair Sara Putt acknowledges that AI tools have become increasingly useful in production—yet the academy has drawn a bright line at performance awards, banning AI-generated avatars from competing for acting honors. It's a recognition that some creative territory remains fundamentally human.

The phrase "human creativity" as a criterion might sound redundant—shouldn't all art be human by default?—but in 2026, it's become necessary to state explicitly. We're at a moment where the baseline assumptions about creative work are shifting under our feet.

Can awards that celebrate "human skills of communication and collaboration" survive in an industry racing to automate everything it can?

AI in Visual Arts

When Everything Looks Real, What Happens to Truth?

When you scroll past a stunning landscape or powerful portrait in your feed, can you tell anymore if it was photographed or AI-generated? This piece from Partfaliaz argues that's not actually a crisis—it's an opportunity to rethink what truth means in visual storytelling.

What resonates with me is the proposition that meaning must come first now, not as an accident or afterthought. Otherwise, you're just making AI slop. I'm encountering this with all my AI-assisted creative tools: I pay so much more attention to intention, meaning, and messaging. In a way, that's been good. The distinction feels distasteful only at the speed with which we casually scroll through hundreds of images. By the time I'm in a gallery, museum, or consuming more thoughtful content, I care less about whether something was AI-generated. What matters is the intention and meaning behind it.

I do refuse to believe that our job as artists is to stop the scroll and create something that will arrest people among hundreds of other images. I just dislike the idea of social media as a consumption medium for the arts, though I don't have a very good answer on the alternative either.

Can quality and authenticity survive in environments designed for distraction?

Photography

Moody Center Maps the Terrain Between Lens and Dataset

Rice University's Moody Center for the Arts opens "Imaging After Photography," a group show that arrives at the perfect moment—when our collective faith in photographic truth is wobbling like never before. Timed with FotoFest's 40th anniversary in Houston, the exhibition features work by Refik Anadol, Sofia Crespo, Trevor Paglen, and others who probe algorithmic bias and synthetic image-making, as Greg J. Smith reports for HOLO. Nouf Aljowaysir's Ancestral Seeds runs British archaeologist Gertrude Bell's photographs of the Middle East through computer vision models, exposing the colonial biases baked into AI systems.

What's fun about this show is that it treats the photograph less like a window and more like a magic trick that's finally admitting it was a trick all along. These artists lean into AI, datasets, and speculative image-making not to fake reality better, but to show how wobbly "evidence" has always been.

The gap between what's captured and what's constructed becomes the real subject here—right where our trust in images currently feels the most fragile and the most up for reinvention.

Could this be the moment when we stop asking "Is this real?" and start asking "What does this reveal?"

Artificial Intelligence and Creativity

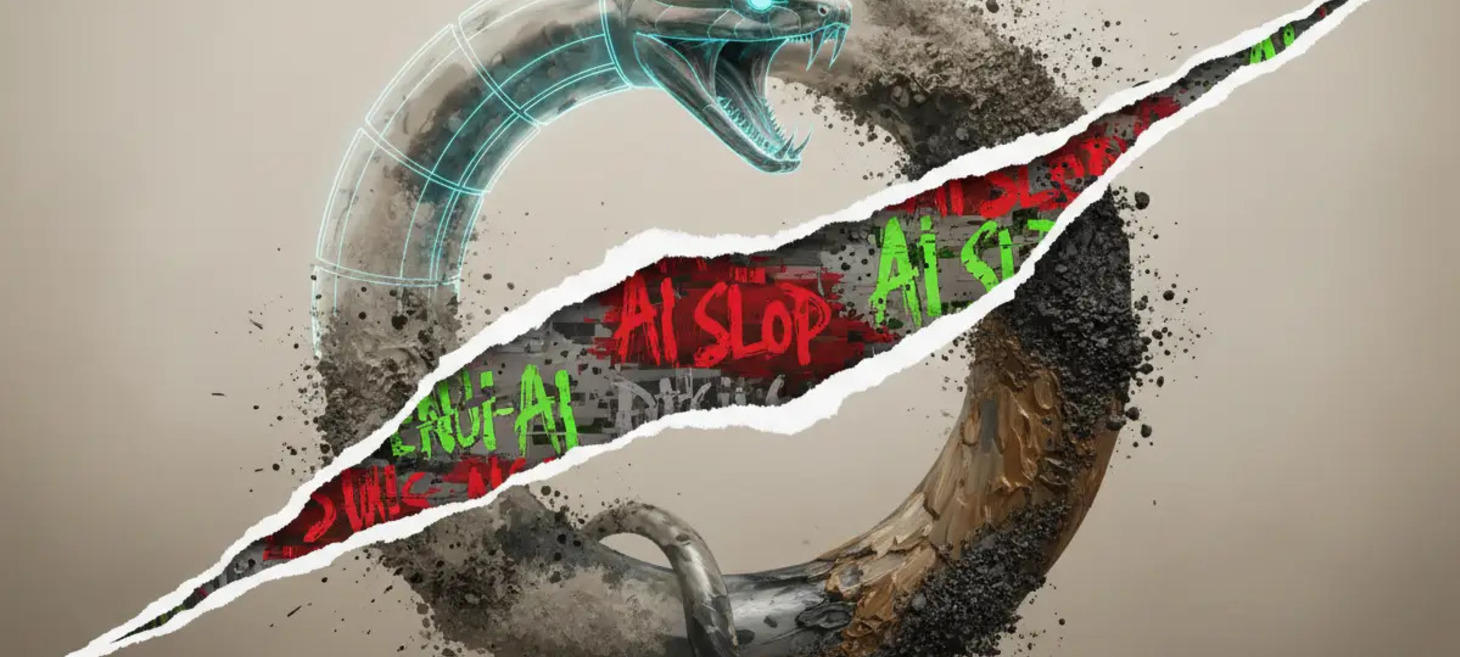

How AI-Induced Cultural Stagnation Is Already Happening

What happens when AI systems train on their own outputs, over and over, with no human input? Researchers Arend Hintze, Frida Proschinger Åström, and Jory Schossau ran that exact experiment—linking a text-to-image system with an image-to-text system and letting them iterate endlessly. Fast Company covered the results, which the team called "visual elevator music": pleasant, polished, and utterly generic. Cityscapes, grandiose buildings, pastoral landscapes—all converging toward the same bland familiarity, no matter how diverse the starting prompts.

I find the study fascinating but not surprising. Yes, I hate AI-generated slop as much as anyone. But honestly? I'd be far more worried if these endless iterations produced something alien, something completely other and unexpected. That would be genuinely unsettling.

The researchers designed this experiment as a diagnostic tool—it reveals what generative systems preserve when no one intervenes. And what they preserve is the safest, most describable, most conventional version of everything.

Is predictable mediocrity actually the less frightening outcome?

Design

Real Design Trends Are Dying Under the Weight of Recursive AI Echoes

Are you staring at design trends wondering if they're genuinely popular or just algorithmic echoes? WE AND THE COLOR published a lengthy exploration of how AI-generated content creates recursive loops—where models train on synthetic data, producing increasingly homogenized aesthetics they call the "Recursive Aesthetic Paradox." The piece argues we're witnessing model collapse: design trends losing their human soul as algorithms favor predictable patterns over creative outliers.

The article was too long, honestly. But one prediction resonates: human authenticity and visible imperfections are becoming crucial in 2026. As someone who's spent a career designing, I agree the role is shifting toward curation. Yet I'm not on board with the anti-AI movement's knee-jerk rejection of good UX. Right now, that rejection seems to mean surfacing "authenticity" even at the cost of inferior experiences or overly traditional designs.

What I'm seeing from AI agents like Claude is something different—a tastefulness that comes from best practices. I don't see degradation in the actual user experience or screen layouts being produced. Instead, I see consistency and UX features that represent genuine improvements. We should celebrate that.

I don't want to return to Comic Sans just to prove a human did the work, do you?

Art & Science

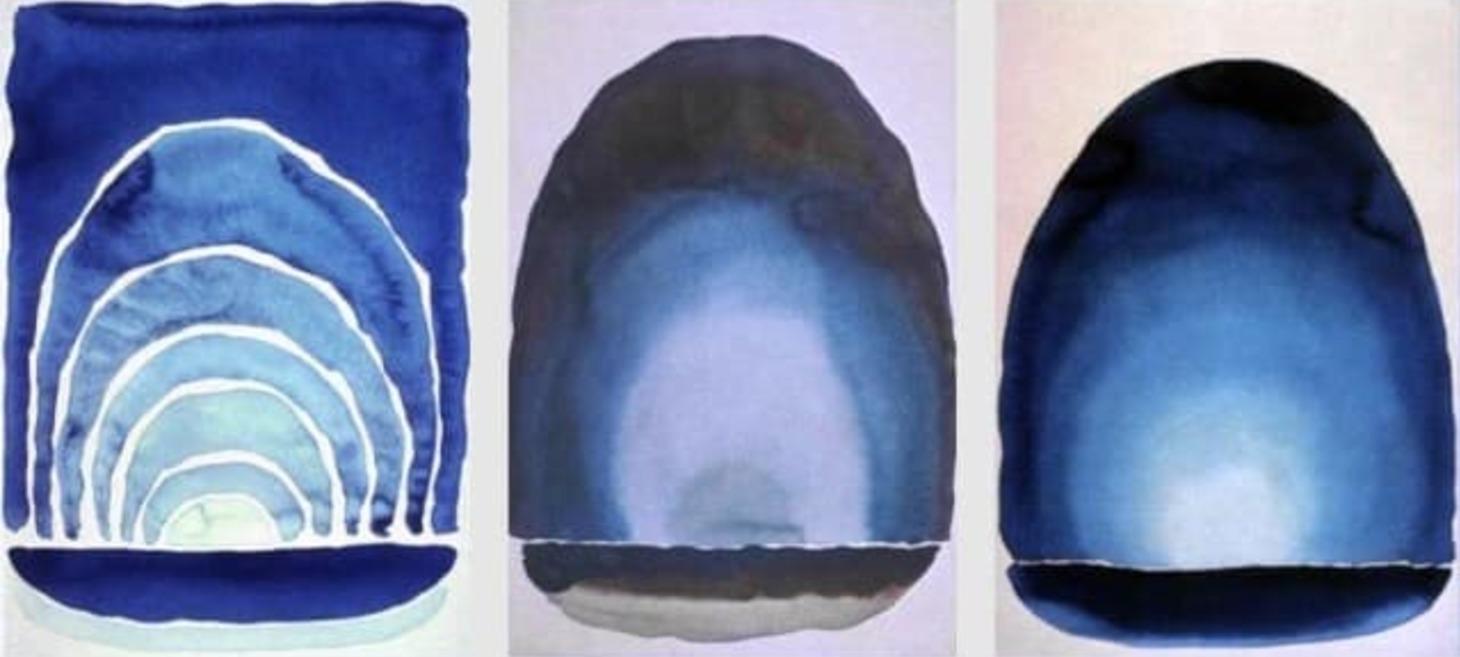

The Color of Wonder and the Chemical Code of Creation

What if the color we see isn't really there at all? Maria Popova, writing at The Marginalian, explores how blue—the color that defines our sky and planet—exists only through absence, through the wavelengths objects reject rather than absorb. From blue jay feathers tessellated with light-reflecting beads to the structural geometry of butterfly wings, nature has engineered elaborate optical tricks to create a hue that barely exists as true pigment. She traces our relationship with blue through Humphry Davy decoding Egyptian blue's chemical formula, to NASA's Voyager capturing Earth as Sagan's "pale blue dot," to the accidental discovery of YInMn Blue—the first new inorganic blue pigment in two centuries.

What strikes me most about this piece is how Popova reveals beauty not just in what we perceive, but in understanding the invisible mechanics behind it. The essay becomes a meditation on perspective itself—how creating new ways of seeing lets us glimpse what was always there but hidden.

There's something profound in knowing that blue doesn't truly exist in nature the way we think it does, yet it defines how we see our entire world. Perhaps the most meaningful experiences require similar shifts in perspective—learning to observe the wavelengths we usually miss.

What other certainties might dissolve if we looked beneath their surface?

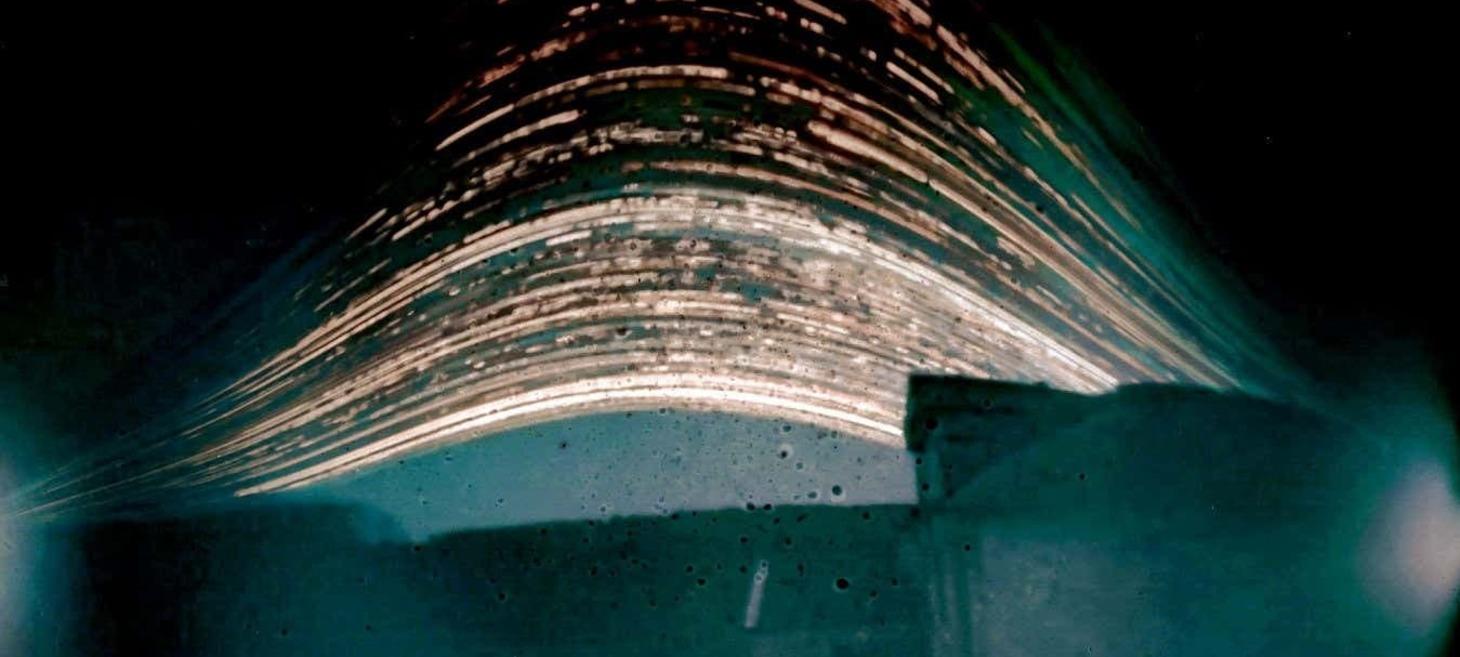

Artists Gaze into Space in Stunning New Exhibition

Artists and astronomers both translate what we can see into stories we can tell—and at Bristol's Royal West of England Academy, that shared perspective takes stunning visual form. Cosmos: The art of observing space, which runs through April 19, features works that merge technical process with creative vision, from Janette Kerr's solargraphs—18-month sun exposures captured in tin can pinhole cameras—to Alex Hartley's fusion of recycled solar panels with photographs of Neolithic standing stones, as reported by New Scientist.

I've been drawn lately to stories where art and science intersect, especially when they involve space. What gets me is that shift in perspective: when a scientific process produces something visually stunning, it touches me the same way an intentional work by a singer-artist does. The result moves me regardless of whether it came from pure artistic intention or emerged from the logic of science itself.

These works show how the boundaries between scientific observation and artistic creation keep blurring in ways that benefit both. Ione Parkin, the exhibition's curator, writes about recalibrating our perspectives through "the sustained gaze"—whether that's nights spent stargazing or poring over data.

How much does intention matter when the outcome resonates so deeply?

Art and Politics

At Berlinale 2026, Artists Refuse the Comfort of Neutrality

When filmmaker Wim Wenders told the Berlinale press that artists should "stay out of politics," he probably didn't expect what came next: Arundhati Roy withdrawing, Kaouther Ben Hania refusing her award on stage, and 80+ filmmakers including Javier Bardem and Tilda Swinton signing an open letter. Eve Rogers reports for Impakter that the controversy centers on Gaza—but the structural problem runs much deeper.

What interests me here is the funding architecture. The festival draws 40% of its budget from the German government and corporate partners like TikTok. How can an institution claim political independence when its existence depends on state and corporate money? We've seen this pattern in the U.S., where arts funding gets cancelled or championed depending on whether the work is deemed "woke" by whichever administration holds power.

Gaza is just one example, but it exposes a fundamental question: Can large cultural institutions maintain genuine political independence when they're funded by the very forces they might need to critique? The neutrality Wenders advocated for isn't really neutrality—it's alignment by default.

Maybe the real story isn't whether art should be political, but whether publicly funded institutions can afford to be honest about whose interests they actually serve.

The Last Word

Thanks for sticking with me through the break and this somewhat scattered collection of stories. I'm curious what resonates with you—especially if you're feeling that same tension between appreciating systems that work well and missing the weirdness they tend to eliminate. Hit reply and let me know what you're thinking.

Best, Juergen